The Role of GPUs in Deep Learning

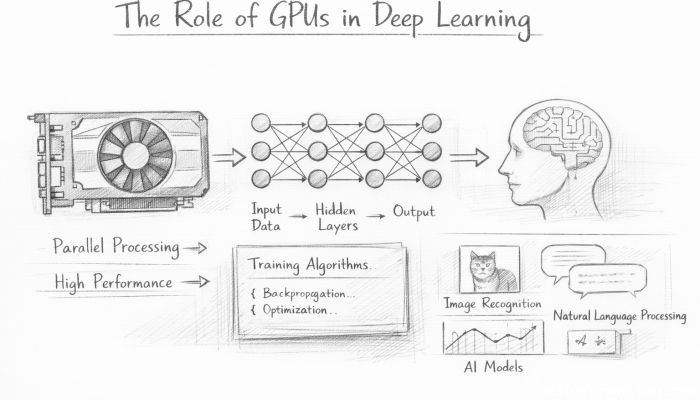

Deep learning enables computers to perform complex tasks such as image recognition, natural language processing, and autonomous driving. Much of that progress depends on the hardware used to train these systems, primarily Graphics Processing Units (GPUs). Originally designed to accelerate the rendering of images and video for gaming and visualization, GPUs have proven indispensable for deep learning because their architecture is optimized for parallel processing. This article examines the role GPUs play in deep learning: their architecture, benefits, and limitations, why they outpace traditional CPUs in training neural networks, and where the hardware is heading.

- Understanding the Basics: What is a GPU?

- The Architecture of GPUs vs CPUs

- Parallel Processing and Matrix Multiplications in Deep Learning

- Speeding Up Neural Network Training

- GPU Memory and Its Implications for Large Models

- Role of GPUs in Inference and Real-time Applications

- Energy Efficiency and Cost Considerations

- GPU Ecosystem: Software and Tool Support

- Multi-GPU and Distributed Training

- The Future of GPUs in Deep Learning: Towards Specialized Hardware

- Challenges and Limitations of GPUs in Deep Learning

- Democratizing Deep Learning Through GPUs

- Conclusion


Understanding the Basics: What is a GPU?

A Graphics Processing Unit (GPU) is a specialized processor originally engineered to render images and video by performing many calculations simultaneously. Unlike a Central Processing Unit (CPU), which is optimized for fast sequential execution, a GPU consists of thousands of smaller cores designed to work in parallel. This parallelism makes GPUs well suited to the large matrix operations at the core of deep learning computations. Because deep learning models process large datasets with the same mathematical operations repeated many times, GPUs became the hardware of choice for accelerating training and inference.

The Architecture of GPUs vs CPUs

To appreciate why GPUs are so well suited to deep learning, compare their architecture with that of CPUs. A CPU typically has relatively few powerful cores with large caches, optimized for low-latency sequential execution across a wide variety of tasks. A GPU instead packs thousands of smaller, simpler cores that run many threads at once, trading single-thread speed for aggregate throughput. This design is highly efficient for the matrix and vector calculations that dominate deep learning workloads. GPU memory bandwidth is also significantly higher than that of CPUs, so large volumes of data can be moved quickly during training.

Parallel Processing and Matrix Multiplications in Deep Learning

At the heart of deep learning are operations such as matrix multiplications, convolutions, and activation functions, all applied to large multidimensional arrays (tensors). Training a neural network repeats these matrix multiplications layer after layer and iteration after iteration, which demands immense computational power. GPUs excel at these tasks because their thousands of cores can perform many arithmetic operations simultaneously, drastically reducing training time. This capability matters most for large-scale neural networks that contain millions or billions of parameters.
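To make the parallelism concrete, here is a minimal pure-Python sketch of matrix multiplication. The function names `matmul` and `matmul_flops` are illustrative, not part of any library, and the code runs sequentially on a CPU. It only shows that each output element is an independent dot product, which is the property a GPU exploits.

```python
def matmul(a, b):
    """Multiply an m x k matrix by a k x n matrix (lists of lists)."""
    m, k, n = len(a), len(b), len(b[0])
    if any(len(row) != k for row in a):
        raise ValueError("inner dimensions do not match")
    # Each output element c[i][j] depends only on row i of a and column j of b,
    # so all m * n dot products could in principle be computed at the same time.
    return [[sum(a[i][p] * b[p][j] for p in range(k)) for j in range(n)]
            for i in range(m)]


def matmul_flops(m, k, n):
    """Approximate operation count: one multiply and one add per term."""
    return 2 * m * k * n


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
product = matmul(a, b)
print(product)                          # [[19, 22], [43, 50]]
print(matmul_flops(1024, 1024, 1024))   # 2147483648
```

A single 1024 x 1024 multiplication already needs roughly two billion operations, and a training run performs many such multiplications per step.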

Speeding Up Neural Network Training

Training a deep learning model often involves passing over millions of data points many times (each full pass is called an epoch) to minimize error and optimize parameters. On CPU-only systems, this process can take weeks or months for large models. GPUs accelerate training by processing large batches of data in parallel, compressing training timelines. Frameworks like TensorFlow, PyTorch, and MXNet have integrated GPU support to harness this computing power effectively. By cutting training time, in many cases from days to hours, GPUs have played a crucial role in making deep learning practical and accessible.
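The structure of epochs and batches can be sketched with a toy example. The following pure-Python loop fits a single weight with mini-batch gradient descent. The function `train_linear`, the data, and the hyperparameters are all assumptions made for illustration, and nothing here runs on a GPU. The per-example terms inside each batch are independent of one another, which is the work a framework would hand to the GPU in parallel.

```python
def train_linear(xs, ys, epochs=200, batch_size=2, lr=0.05):
    """Fit y = w * x (approximately) with mini-batch gradient descent."""
    w = 0.0
    for _ in range(epochs):                          # one epoch = one full pass
        for start in range(0, len(xs), batch_size):  # one update per mini-batch
            bx = xs[start:start + batch_size]
            by = ys[start:start + batch_size]
            # Gradient of mean((w*x - y)^2) with respect to w over the batch.
            grad = sum(2 * (w * x - y) * x for x, y in zip(bx, by)) / len(bx)
            w -= lr * grad
    return w


xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]   # generated by y = 2x
w = train_linear(xs, ys)
print(round(w, 6))  # 2.0
```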

GPU Memory and Its Implications for Large Models

Memory capacity and bandwidth are critical aspects of deep learning hardware. GPUs have dedicated high-speed memory, commonly called VRAM (Video RAM), which holds model parameters and the intermediate results of training close to the compute cores. However, as models grow larger and datasets become more complex, memory capacity can become a bottleneck. Techniques such as memory pooling, gradient checkpointing, and model parallelism help work around these limits by managing memory more efficiently or distributing the model across devices. Newer GPUs also ship with substantially larger VRAM capacities, enabling the training of larger, more data-intensive models.
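A back-of-the-envelope estimate shows why memory becomes a bottleneck. The sketch below assumes 32-bit values throughout and an Adam-style optimizer that keeps two extra values per parameter. Both are assumptions rather than properties of any particular setup, and the helper `training_memory_gib` is hypothetical. It also ignores activations, which can dominate in practice.

```python
def training_memory_gib(num_params, bytes_per_value=4, optimizer_states=2):
    """Rough memory for weights, gradients, and optimizer states, in GiB.

    Assumes every tensor is stored at the same precision and ignores
    activations and framework overhead.
    """
    copies = 1 + 1 + optimizer_states   # weights + gradients + optimizer states
    return num_params * bytes_per_value * copies / 2**30


estimate = training_memory_gib(1_000_000_000)   # a one-billion-parameter model
print(f"{estimate:.1f} GiB")  # 14.9 GiB
```

Under these assumptions, a one-billion-parameter model needs about 15 GiB before a single activation is stored, which is why techniques that trade compute for memory or spread the model across devices matter.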

Role of GPUs in Inference and Real-time Applications

While training deep learning models is computationally intensive, inference (applying a trained model to new data) also benefits greatly from GPU acceleration. Many real-time applications such as voice assistants, facial recognition systems, and recommendation engines rely on rapid inference to offer seamless user experiences. GPUs can process hundreds or thousands of inference requests simultaneously, typically by grouping them into batches, which raises throughput and helps keep latency low under heavy load. This makes it practical to deploy more sophisticated AI applications at scale. Edge GPUs and specialized inference accelerators are emerging to meet the demands of mobile and embedded AI deployment.
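Batching is one common way a GPU serves many requests at once. The sketch below groups pending requests into batches and uses a deliberately simple, hypothetical cost model (a fixed overhead per batch plus a small per-item cost) to show why larger batches raise throughput. The function names and the millisecond figures are illustrative assumptions, not measurements.

```python
def make_batches(requests, max_batch_size):
    """Group pending requests into batches of at most max_batch_size."""
    return [requests[i:i + max_batch_size]
            for i in range(0, len(requests), max_batch_size)]


def throughput(batch_size, fixed_ms, per_item_ms):
    """Requests per second under a hypothetical cost model:
    batch time = fixed overhead + per-item cost * batch size."""
    batch_ms = fixed_ms + per_item_ms * batch_size
    return batch_size / batch_ms * 1000


batches = make_batches(list(range(10)), max_batch_size=4)
print(batches)  # [[0, 1, 2, 3], [4, 5, 6, 7], [8, 9]]

single = throughput(1, fixed_ms=5.0, per_item_ms=0.5)
batched = throughput(32, fixed_ms=5.0, per_item_ms=0.5)
print(batched > single)  # True
```

The trade-off is that each request in a large batch waits for the whole batch to finish, so serving systems usually cap the batch size to keep per-request latency acceptable.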

Energy Efficiency and Cost Considerations

One often-overlooked aspect of GPUs in deep learning is their energy efficiency relative to CPUs on parallel computations. Although a GPU draws substantial power, total energy is power multiplied by time, so a job that finishes much faster can consume less energy overall. The cost per computation is often lower as well, making GPUs a cost-effective option for organizations conducting large-scale AI research. Cloud service providers offering GPU-based instances have further democratized access to this computing power, allowing startups and individual researchers to use GPUs without massive upfront investments in hardware.
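The energy argument comes down to simple arithmetic: energy equals power multiplied by time. The wattages and durations below are hypothetical figures chosen only to illustrate the calculation, not measurements of real hardware.

```python
def energy_kwh(power_watts, hours):
    """Energy in kilowatt-hours for a device drawing constant power."""
    return power_watts * hours / 1000


# Hypothetical figures chosen only to illustrate the arithmetic.
cpu_energy = energy_kwh(power_watts=200, hours=100)  # lower power, slower job
gpu_energy = energy_kwh(power_watts=400, hours=10)   # higher power, faster job
print(cpu_energy, gpu_energy)  # 20.0 4.0
```

In this made-up scenario the GPU draws twice the power but finishes ten times sooner, so it uses one fifth of the energy. Whether a real workload behaves this way depends on the actual speedup and power draw.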

GPU Ecosystem: Software and Tool Support

The rise of GPUs in deep learning is closely tied to the development of software frameworks and tools that let developers exploit GPU capabilities easily. CUDA, developed by NVIDIA, is a parallel computing platform and programming model that allows developers to run general-purpose computations on GPUs. Libraries built on top of it, such as cuDNN (CUDA Deep Neural Network library), offer optimized routines for common deep learning operations. The synergy between powerful hardware and supportive software ecosystems has greatly accelerated research and commercial deployment of AI applications globally.
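In the CUDA programming model, the same function (a kernel) is run by many threads organized into blocks, and each thread uses its block and thread indices to decide which element to work on. The following is a plain-Python emulation of that indexing scheme, not CUDA code. The `launch` helper is hypothetical and runs the "threads" one after another, whereas a GPU would run them concurrently.

```python
def vector_add_kernel(block_idx, thread_idx, block_dim, a, b, out):
    """Body executed by one simulated thread."""
    i = block_idx * block_dim + thread_idx   # global index, as in CUDA kernels
    if i < len(out):                         # guard threads past the end
        out[i] = a[i] + b[i]


def launch(kernel, grid_dim, block_dim, *args):
    """Run the kernel once per (block, thread) pair. This loop is sequential
    and only mimics the indexing of a real GPU launch."""
    for block_idx in range(grid_dim):
        for thread_idx in range(block_dim):
            kernel(block_idx, thread_idx, block_dim, *args)


a = [1, 2, 3, 4, 5]
b = [10, 20, 30, 40, 50]
out = [0] * len(a)
block_dim = 4
grid_dim = (len(a) + block_dim - 1) // block_dim   # ceiling division: 2 blocks
launch(vector_add_kernel, grid_dim, block_dim, a, b, out)
print(out)  # [11, 22, 33, 44, 55]
```

Two blocks of four threads give eight simulated threads for five elements, so the bounds check keeps the three surplus threads from writing out of range.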

Multi-GPU and Distributed Training

As datasets and model sizes grow rapidly, a single GPU may become insufficient for training demands. Multi-GPU setups and distributed training strategies split the workload across multiple GPUs, either within a single machine or across networked clusters. This partitioning makes it possible to train very large models such as GPT and BERT variants while maintaining training efficiency. Interconnects like NVIDIA’s NVLink provide high-speed communication between GPUs, reducing the time spent synchronizing model updates and further boosting performance.
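One widely used strategy is data parallelism: every GPU holds a copy of the model, processes its own shard of the batch, and the per-device gradients are averaged before the update. The sketch below simulates this on a toy one-weight model in pure Python. The function names are illustrative, the "workers" are just list slices, and the averaging step stands in for the all-reduce that real systems perform over the interconnect.

```python
def gradient(w, xs, ys):
    """Gradient of mean((w*x - y)^2) with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def data_parallel_gradient(w, xs, ys, num_workers):
    """Split the batch into equal shards, compute one gradient per simulated
    worker, then average them (the role an all-reduce plays across GPUs)."""
    if len(xs) % num_workers != 0:
        raise ValueError("batch must divide evenly across workers")
    shard = len(xs) // num_workers
    local = [gradient(w, xs[i * shard:(i + 1) * shard],
                      ys[i * shard:(i + 1) * shard])
             for i in range(num_workers)]
    return sum(local) / num_workers


xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]
full = gradient(0.5, xs, ys)
sharded = data_parallel_gradient(0.5, xs, ys, num_workers=2)
print(full, sharded)  # -22.5 -22.5
```

With equal-sized shards, the averaged gradient matches the full-batch gradient, so the model is updated as if one device had processed the whole batch.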

The Future of GPUs in Deep Learning: Towards Specialized Hardware

While GPUs currently dominate the deep learning domain, the future landscape may see specialized hardware such as Tensor Processing Units (TPUs), Neural Processing Units (NPUs), and custom ASICs (Application-Specific Integrated Circuits) becoming more prominent. These chips are designed specifically for AI workloads and can offer even better performance and energy efficiency for particular use cases. Nevertheless, GPUs continue to evolve, with improvements in architecture, memory, and integration of AI-optimized cores. Their flexibility, mature ecosystem, and widespread adoption ensure they will remain a backbone of deep learning for the foreseeable future.

Challenges and Limitations of GPUs in Deep Learning

Despite their advantages, GPUs still present certain limitations in deep learning applications. Thermal management, hardware cost, and limited availability in low-resource environments can all pose challenges. The learning curve for GPU programming can also be steep, since optimization requires specialized knowledge. Finally, the ever-increasing size of state-of-the-art models strains even the most advanced GPUs, prompting techniques like model pruning, quantization, and mixed-precision training to balance performance with resource constraints.
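Quantization, one of the techniques mentioned above, stores values at lower precision to save memory and bandwidth. The following is a minimal sketch of symmetric 8-bit quantization with a single shared scale. It is one simple scheme among several, the function names are illustrative, and production libraries use more refined variants.

```python
def quantize_int8(values):
    """Symmetric quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def dequantize(q, scale):
    """Recover approximate floats from the integers and the shared scale."""
    return [x * scale for x in q]


weights = [0.4, -1.0, 0.25, 0.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
print(q)  # [51, -127, 32, 0]
```

Each value now fits in one byte instead of four, at the cost of a rounding error of at most half the scale per value.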

Democratizing Deep Learning Through GPUs

The proliferation of GPUs has not only accelerated AI research but also democratized access to deep learning technologies. Cloud platforms like AWS, Google Cloud, and Microsoft Azure provide on-demand GPU access, allowing developers worldwide to train complex models without investing in expensive hardware. This accessibility has fueled innovation across industries, from healthcare and finance to entertainment and robotics, helping even small teams and individuals create impactful AI solutions. The falling cost per unit of GPU compute and the rise of educational resources are enabling more people to engage with deep learning than ever before.

Conclusion

GPUs have fundamentally transformed the field of deep learning by providing the necessary computational horsepower to train and deploy complex neural networks efficiently. Their unique parallel architecture, high memory bandwidth, and rapid evolution have made GPUs the de facto standard in AI hardware. By dramatically speeding up training times and enabling real-time inference, GPUs have unlocked new possibilities across numerous applications and industries. While future specialized AI hardware may reshape the landscape, the widespread ecosystem support and scalable power of GPUs ensure they remain crucial to deep learning’s continued growth. As the demand for more sophisticated models grows, GPUs will continue to play an indispensable role in driving innovation and expanding the horizons of artificial intelligence.
