The Importance of Data Quality in AI

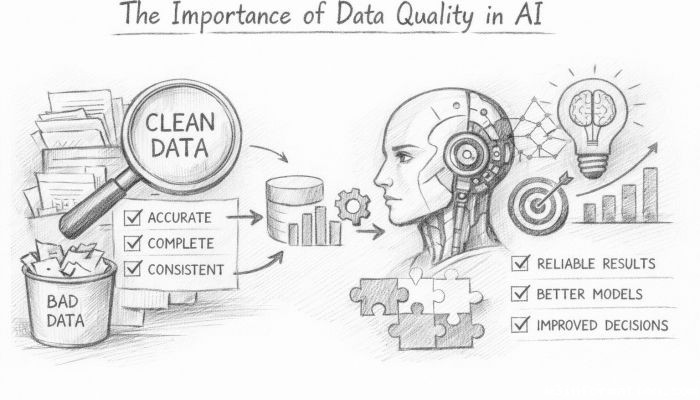

In artificial intelligence (AI), data is the fuel that powers intelligent systems. The adage "garbage in, garbage out" applies directly: the quality of the data fed into AI algorithms shapes their performance, reliability, and ethical outcomes. As organizations increasingly rely on AI for decision-making, automation, and innovation, understanding data quality has become essential. This article explains why data quality is crucial for AI success, covering the elements that define good data, the problems caused by poor data, and strategies for maintaining integrity throughout the data lifecycle. It also shows how prioritizing data quality enables trustworthy, efficient, and impactful AI applications across industries.

- Defining Data Quality in the Context of AI

- The Role of Data Quality in Model Accuracy

- Data Bias and Its Impact on AI Outcomes

- The Cost of Poor Data Quality in AI Projects

- Data Collection Methods and Their Influence on Quality

- Data Preprocessing: Cleaning and Transformation

- Data Annotation and Labeling Accuracy

- The Importance of Data Diversity and Representativeness

- Real-time Data Quality Management

- Regulatory and Ethical Standards Driving Data Quality

- Technologies and Tools for Enhancing Data Quality

- Future Trends: Data Quality and the Evolution of AI

- Conclusion

- More Related Topics

Defining Data Quality in the Context of AI

Data quality refers to how well data meets the needs of its intended use, encompassing attributes such as accuracy, completeness, consistency, timeliness, and relevance. In AI, quality data must be not only clean and error-free but also representative and unbiased, so that models are fair and generalize well. Traditional data analytics can often tolerate minor imperfections, but AI systems, especially those built on machine learning, depend on large datasets in which subtle flaws can skew results or propagate biases. An effective definition of data quality in AI must therefore also consider dimensions such as validity, uniqueness, and granularity, so that model training leads to trustworthy outcomes.
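Several of these dimensions can be expressed as simple ratios over a dataset. The following is a minimal sketch, not a standard scoring scheme: the records, field names, the 0 to 120 age rule, and the 90-day freshness window are all assumptions chosen for illustration.

```python
from datetime import date

# Hypothetical records; field names and values are invented for illustration.
records = [
    {"id": 1, "age": 34, "country": "DE", "updated": date(2024, 5, 1)},
    {"id": 2, "age": None, "country": "FR", "updated": date(2024, 5, 3)},
    {"id": 2, "age": 29, "country": "FR", "updated": date(2021, 1, 9)},
    {"id": 4, "age": 212, "country": "ES", "updated": date(2024, 4, 28)},
]

def completeness(rows, field):
    """Share of rows where the field is present (not None)."""
    return sum(r[field] is not None for r in rows) / len(rows)

def uniqueness(rows, key):
    """Share of distinct key values among all rows."""
    return len({r[key] for r in rows}) / len(rows)

def validity(rows, field, is_valid):
    """Share of non-missing values that pass a domain rule."""
    values = [r[field] for r in rows if r[field] is not None]
    return sum(is_valid(v) for v in values) / len(values)

def timeliness(rows, field, as_of, max_age_days):
    """Share of rows updated within max_age_days of the as_of date."""
    return sum((as_of - r[field]).days <= max_age_days for r in rows) / len(rows)

report = {
    "completeness(age)": completeness(records, "age"),
    "uniqueness(id)": uniqueness(records, "id"),
    # Assumed domain rule: a plausible human age lies between 0 and 120.
    "validity(age)": validity(records, "age", lambda a: 0 <= a <= 120),
    # Assumed freshness window: 90 days before a fixed reference date.
    "timeliness(updated)": timeliness(records, "updated", date(2024, 5, 10), 90),
}

for name, score in report.items():
    print(f"{name}: {score:.2f}")
```

Scores like these are only as meaningful as the rules behind them, so the thresholds would normally come from domain experts rather than from defaults.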

The Role of Data Quality in Model Accuracy

The accuracy of AI models, whether in computer vision, natural language processing, or predictive analytics, depends heavily on the quality of their input data. Models trained on clean, precise, and relevant datasets generally exhibit superior predictive performance. Mislabeled images or corrupted text, for instance, can confuse learning algorithms and produce incorrect classifications or faulty predictions. Datasets that cover a broad range of realistic scenarios also help models generalize to new data. This is why systematic data validation, cleansing, and verification are essential steps before and during AI model development.
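The effect of mislabeled training data can be seen even in a toy setting. The sketch below trains a nearest-centroid classifier (chosen here only because it is small enough to read at a glance) on a clean and a noisy copy of the same one-dimensional data. All values are invented, and real models and datasets will behave differently in degree.

```python
def fit_centroids(xs, ys):
    """Return the mean feature value for each class label."""
    sums, counts = {}, {}
    for x, y in zip(xs, ys):
        sums[y] = sums.get(y, 0.0) + x
        counts[y] = counts.get(y, 0) + 1
    return {label: sums[label] / counts[label] for label in sums}

def predict(centroids, x):
    """Assign x to the class whose centroid is closest."""
    return min(centroids, key=lambda label: abs(x - centroids[label]))

def accuracy(centroids, xs, ys):
    """Share of examples whose predicted label matches the true label."""
    return sum(predict(centroids, x) == y for x, y in zip(xs, ys)) / len(xs)

# Toy training data: class 0 clusters near 2, class 1 clusters near 8.
train_x = [1.0, 2.0, 3.0, 7.0, 8.0, 9.0]
clean_y = [0, 0, 0, 1, 1, 1]
noisy_y = [0, 0, 0, 0, 0, 1]  # two class-1 examples mislabeled as class 0

test_x = [4.0, 6.0, 6.5, 10.0]
test_y = [0, 1, 1, 1]

clean_acc = accuracy(fit_centroids(train_x, clean_y), test_x, test_y)
noisy_acc = accuracy(fit_centroids(train_x, noisy_y), test_x, test_y)
print(f"clean labels: {clean_acc:.2f}, noisy labels: {noisy_acc:.2f}")
```

The two mislabeled points pull the class-0 centroid toward class 1, which shifts the decision boundary and misclassifies the test points near it.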

Data Bias and Its Impact on AI Outcomes

Data collected from real-world sources often reflects existing societal biases, which can inadvertently be amplified by AI models trained on such datasets. Poor data quality in the form of unbalanced representation—such as underrepresenting minority groups—can lead to biased AI decisions that perpetuate inequality, discrimination, or unfair treatment. An example is facial recognition systems with lower accuracy rates for certain demographic groups due to skewed training data. Good data quality must therefore include ethical considerations that focus on diversity and fairness, minimizing bias through deliberate dataset curation and augmentation.
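One common way to surface this kind of problem is to break evaluation results down by group instead of reporting a single overall accuracy. The sketch below assumes a hypothetical list of `(group, true_label, predicted_label)` tuples; the group names and numbers are invented and do not come from any real system.

```python
from collections import defaultdict

# Hypothetical evaluation results: (group, true_label, predicted_label).
results = [
    ("A", 1, 1), ("A", 0, 0), ("A", 1, 1), ("A", 0, 0),
    ("A", 1, 1), ("A", 0, 1),
    ("B", 1, 0), ("B", 0, 0),
]

def group_report(rows):
    """Return {group: (share_of_dataset, accuracy_within_group)}."""
    totals, correct = defaultdict(int), defaultdict(int)
    for group, truth, pred in rows:
        totals[group] += 1
        correct[group] += int(truth == pred)
    n = len(rows)
    return {g: (totals[g] / n, correct[g] / totals[g]) for g in totals}

report = group_report(results)
accuracies = [acc for _, acc in report.values()]
accuracy_gap = max(accuracies) - min(accuracies)

for group, (share, acc) in sorted(report.items()):
    print(f"group {group}: share={share:.2f}, accuracy={acc:.2f}")
print(f"accuracy gap: {accuracy_gap:.2f}")
```

A large gap between groups, especially when the weaker group is also the smaller one, is a signal to revisit how the dataset was collected and balanced. Accuracy is only one possible metric here; other fairness measures may suit a given application better.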

The Cost of Poor Data Quality in AI Projects

The ramifications of poor data quality extend far beyond reduced model accuracy. In AI projects, low-quality data can lead to faulty business decisions, wasted resources, and diminished stakeholder trust. For example, errors in financial forecasting models or healthcare diagnostics due to unreliable data might incur significant monetary losses or jeopardize human safety. Additionally, the need to re-collect, re-clean, or retrain AI models adds considerable time and cost burdens. Organizations must recognize that investing in data quality early on is not merely a technical concern but a strategic priority to avoid costly consequences.

Data Collection Methods and Their Influence on Quality

How data is collected profoundly affects its subsequent quality. Each method, whether automated sensors, web scraping, surveys, or manual entry, introduces its own degree of noise, error, or bias. Automated collection can generate large volumes of data quickly but may capture irrelevant or flawed information if it is not properly calibrated. Survey data, while potentially rich in nuance, can suffer from human error or biased responses. Transparency and rigor in data acquisition protocols, combined with ongoing validation, are therefore critical to preserving data integrity from the outset.

Data Preprocessing: Cleaning and Transformation

Raw data rarely arrives in a state ready for AI consumption, so preprocessing is vital to data quality. This phase includes cleaning steps such as removing duplicates, correcting errors, handling missing values, and standardizing formats. Normalization and feature engineering then transform raw data into meaningful variables that improve the learning process. Careful preprocessing also reduces noise in the data, which lowers the risk that a model overfits to spurious patterns. Without this step, AI algorithms may internalize irrelevant patterns or systematic data flaws.
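The cleaning and transformation steps described above can be sketched in a few lines of plain Python. This is an illustrative pipeline under stated assumptions, not a recommended production design: the records and field names are hypothetical, duplicates are identified by `id`, missing incomes are filled with the median, and scaling is min-max.

```python
from statistics import median

# Hypothetical raw records with a duplicate, a missing value, and messy text.
raw = [
    {"id": 1, "city": " berlin ", "income": "52000"},
    {"id": 2, "city": "PARIS", "income": None},
    {"id": 2, "city": "PARIS", "income": None},  # exact duplicate
    {"id": 3, "city": "Madrid", "income": "31000"},
    {"id": 4, "city": "rome", "income": "73000"},
]

def preprocess(rows):
    # 1. Remove duplicates, keeping the first row seen for each id.
    seen, unique = set(), []
    for r in rows:
        if r["id"] not in seen:
            seen.add(r["id"])
            unique.append(dict(r))  # copy so the input is left untouched

    # 2. Standardize formats: trim whitespace, title-case text, parse numbers.
    for r in unique:
        r["city"] = r["city"].strip().title()
        r["income"] = float(r["income"]) if r["income"] is not None else None

    # 3. Handle missing values by imputing the median of the observed values.
    fill = median(r["income"] for r in unique if r["income"] is not None)
    for r in unique:
        if r["income"] is None:
            r["income"] = fill

    # 4. Normalize income to the [0, 1] range (min-max scaling).
    lo = min(r["income"] for r in unique)
    hi = max(r["income"] for r in unique)
    for r in unique:
        r["income_scaled"] = (r["income"] - lo) / (hi - lo) if hi > lo else 0.0
    return unique

clean = preprocess(raw)
for row in clean:
    print(row)
```

When data is split into training and test sets, statistics such as the median and the min-max bounds are normally computed on the training split only and then reused on the test split, so that information from the test data does not leak into training.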

Data Annotation and Labeling Accuracy

For supervised machine learning models, labeled data is indispensable. Accurate and consistent data annotation allows AI systems to learn the correct associations between inputs and outputs. However, human annotators are prone to mistakes or subjective judgments, which can degrade dataset quality if unchecked. Crowdsourcing these tasks introduces variability in labeling standards. Hence, rigorous quality assurance processes—including inter-annotator agreement measures, consensus mechanisms, and expert reviews—are essential to ensure the reliability of labels that underpin model training.
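Inter-annotator agreement and consensus can both be computed directly from the labels. The sketch below implements Cohen's kappa for two annotators and a simple majority vote. The label lists are invented, and returning `None` on a tie (to signal that an expert review is needed) is an assumption of this example, not a fixed convention.

```python
from collections import Counter

def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa: agreement between two annotators, corrected for chance."""
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement: probability both pick the same label independently.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)

def majority_vote(votes):
    """Return the most common label, or None when the top labels are tied."""
    ranked = Counter(votes).most_common()
    if len(ranked) > 1 and ranked[0][1] == ranked[1][1]:
        return None  # tie: escalate to an expert reviewer
    return ranked[0][0]

# Hypothetical labels from two annotators for the same ten images.
annotator_1 = ["cat", "cat", "dog", "dog", "cat", "dog", "cat", "dog", "cat", "dog"]
annotator_2 = ["cat", "cat", "dog", "cat", "cat", "dog", "dog", "dog", "cat", "dog"]

kappa = cohen_kappa(annotator_1, annotator_2)
print(f"kappa = {kappa:.2f}")
print(majority_vote(["cat", "dog", "cat"]))
print(majority_vote(["cat", "dog"]))
```

Here the annotators agree on 8 of 10 items, but because half of that agreement would be expected by chance, kappa is lower than the raw 80% figure suggests.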

The Importance of Data Diversity and Representativeness

AI models perform best when trained on data that accurately reflects the environments in which they operate. Diversity and representativeness in datasets prevent model myopia and improve adaptability across different contexts. For example, an AI-powered healthcare diagnostic tool trained solely on data from one ethnic group may fail when applied more broadly. Thus, quality data must encompass various demographics, conditions, and scenarios to support inclusive, robust AI. Data augmentation and synthetic data generation have emerged as tools to improve diversity when genuine data is scarce.
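A basic representativeness check compares how often each group appears in the dataset with how often it appears in the population the model will serve. In the sketch below, the group names, the reference shares, and the 5-percentage-point tolerance are all hypothetical; in practice the reference distribution would come from a trusted external source.

```python
from collections import Counter

def representation_gaps(groups, reference_shares):
    """Return {group: dataset_share - reference_share} for each reference group."""
    counts = Counter(groups)
    n = len(groups)
    return {g: counts.get(g, 0) / n - share for g, share in reference_shares.items()}

def underrepresented(gaps, tolerance=0.05):
    """List groups whose dataset share falls short by more than the tolerance."""
    return sorted(g for g, gap in gaps.items() if gap < -tolerance)

# Hypothetical group membership of 100 training samples.
sample_groups = ["north"] * 67 + ["south"] * 28 + ["east"] * 5
# Hypothetical shares of each group in the target population.
reference = {"north": 0.50, "south": 0.30, "east": 0.20}

gaps = representation_gaps(sample_groups, reference)
flagged = underrepresented(gaps)

for group, gap in gaps.items():
    print(f"{group}: {gap:+.2f}")
print("underrepresented:", flagged)
```

Groups flagged this way are candidates for targeted collection, or for the augmentation and synthetic data techniques mentioned above when genuine data is scarce.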

Real-time Data Quality Management

For AI systems that rely on streaming or real-time data—such as autonomous vehicles or fraud detection systems—maintaining data quality dynamically becomes crucial. Anomalies, latency, or malicious data injection can drastically impair performance or cause harmful decisions. Continuous monitoring, validation, and automated correction mechanisms are necessary to uphold the quality standards needed for real-time AI applications. This ongoing vigilance also requires scalable data infrastructures and sophisticated tools designed for rapid data quality assessment.
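Continuous validation of a stream can be sketched as a small stateful checker that inspects each reading as it arrives. The class below is a hypothetical example: the `StreamMonitor` name, the window size, the valid range, and the z-score threshold of 3 are assumptions, and a rolling z-score is just one of many possible anomaly tests.

```python
from collections import deque
from statistics import mean, pstdev

class StreamMonitor:
    """Flags readings that are missing, out of range, or far from the recent mean."""

    def __init__(self, window=20, z_threshold=3.0,
                 valid_range=(-50.0, 150.0), min_history=5):
        self.history = deque(maxlen=window)  # recent accepted readings
        self.z_threshold = z_threshold
        self.valid_range = valid_range
        self.min_history = min_history

    def check(self, value):
        """Return 'ok', 'missing', 'out_of_range', or 'anomaly' for one reading."""
        if value is None:
            return "missing"
        lo, hi = self.valid_range
        if not lo <= value <= hi:
            return "out_of_range"
        if len(self.history) >= self.min_history:
            mu, sigma = mean(self.history), pstdev(self.history)
            if sigma > 0 and abs(value - mu) / sigma > self.z_threshold:
                return "anomaly"
        # Only accepted readings update the baseline, so outliers cannot skew it.
        self.history.append(value)
        return "ok"

# Hypothetical sensor stream with a spike, a dropout, and an impossible value.
stream = [20.0, 20.5, 19.8, 20.2, 20.1, 20.3, 95.0, None, 400.0, 20.0]
monitor = StreamMonitor()
statuses = [monitor.check(v) for v in stream]
print(statuses)
```

Excluding flagged readings from the baseline keeps a single spike from distorting later checks, but it also means a genuine, lasting shift in the signal would keep being flagged. A real system would need a policy for deciding when to accept the new level.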

Regulatory and Ethical Standards Driving Data Quality

Growing concerns about privacy, transparency, and accountability have led to regulatory frameworks, such as the EU's General Data Protection Regulation (GDPR) and AI Act, that indirectly reinforce the importance of data quality. These regulations demand clear provenance, accuracy, and fairness in the datasets used by AI systems, pushing organizations to implement systematic data governance practices. Ethical AI development further encourages stewardship of data collection, usage, and security, ensuring that high-quality data is managed responsibly to protect individual rights and societal values.

Technologies and Tools for Enhancing Data Quality

Advances in technology have spawned a range of tools designed to automate and improve data quality management. From AI-driven data profiling and anomaly detection to data lineage tracking and metadata management platforms, organizations have an arsenal to address the complexities of large-scale data quality assurance. Machine learning itself is leveraged to detect data inconsistencies or optimize cleaning workflows, creating powerful feedback loops. Selecting and integrating these tools effectively demands an understanding of specific data challenges and organizational goals.

Future Trends: Data Quality and the Evolution of AI

As AI continues to evolve toward greater autonomy and complexity, the demands on data quality will intensify. Emerging fields like federated learning rely on decentralized data sources, raising new challenges in ensuring consistent quality without compromising privacy. Additionally, synthetic data and AI-generated datasets will become more prevalent, requiring novel metrics and standards for assessing quality. Ultimately, data quality will remain a cornerstone of trustworthy AI, necessitating innovative approaches that combine technical rigor with ethical foresight.

Conclusion

The importance of data quality in AI cannot be overstated. Quality data is the bedrock upon which the accuracy, fairness, and reliability of AI systems are built. From defining what constitutes high-quality data to exploring the consequences of poor data, this article highlights a multifaceted understanding vital for successful AI deployment. Ensuring data is accurate, unbiased, comprehensive, and timely enables models to perform optimally and ethically, avoiding pitfalls that could undermine AI’s transformative potential. As AI technologies integrate ever more deeply into daily life and business, prioritizing data quality through robust governance, advanced tools, and ethical practices will be indispensable in shaping a future where AI benefits all stakeholders equitably and sustainably.

More Related Topics

- Big O Notation Explained for Beginners

- AI in Gaming: Smarter NPCs and Environments

- Understanding Bias in AI Algorithms

- Introduction to Chatbots and Conversational AI

- How Voice Assistants Like Alexa Work

- Federated Learning: AI Without Sharing Data